The Problem

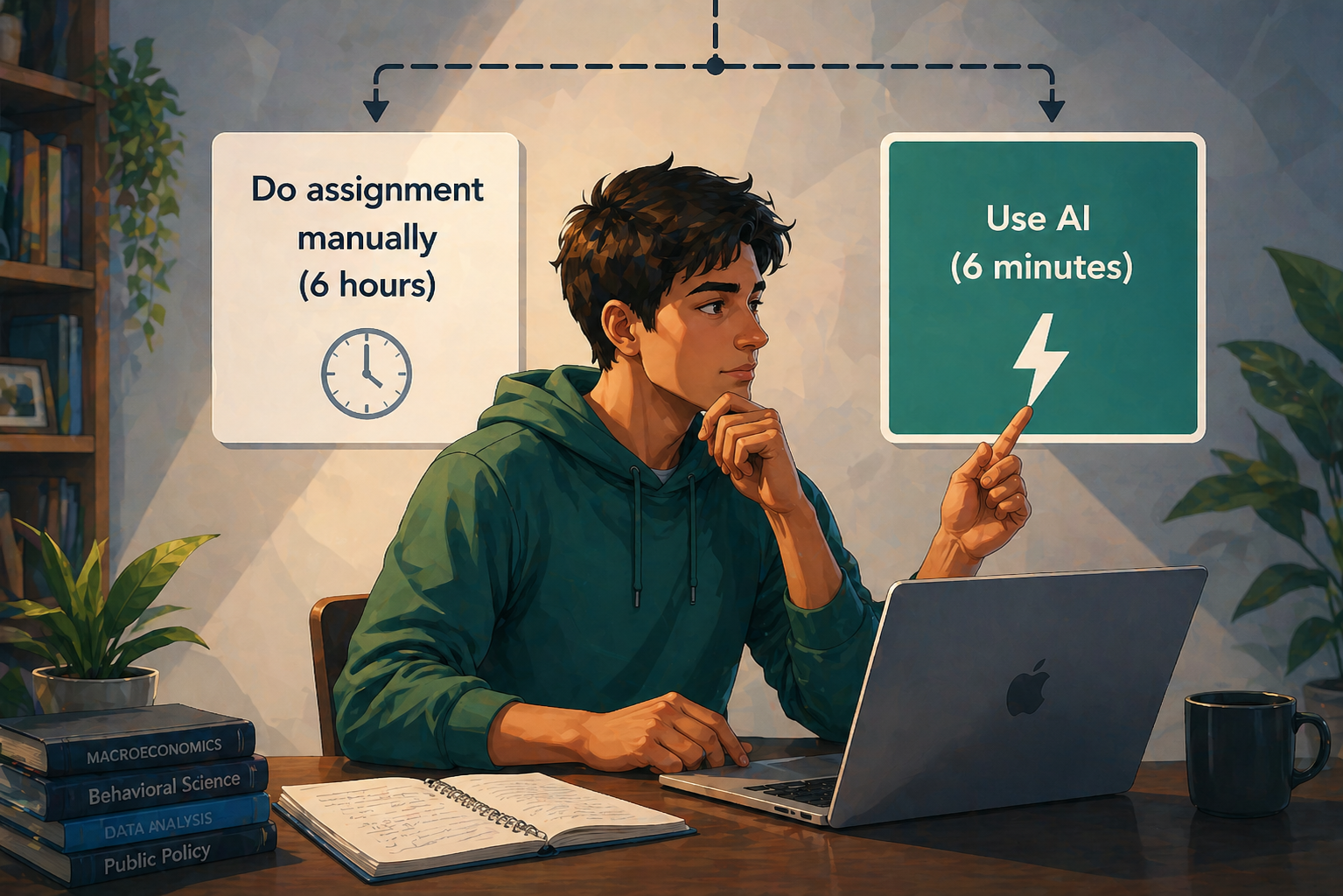

Students are using AI because it works. Not because they're lazy, not because they want to get away with something, but because they're doing exactly what any rational person does when a faster tool is available: they use it.

Think about what college actually is from a student's perspective. You're spending four years and somewhere between fifty and two hundred thousand dollars to prepare yourself for a workforce that runs on AI. Every job posting mentions it. Every hiring manager expects it. The skills that will determine whether you thrive or struggle professionally are being shaped right now, in these years, in these courses. And a significant portion of those courses respond to this reality by banning the most important professional tool of the next thirty years.

That's worth sitting with for a second.

The student who sprints to ChatGPT the moment an assignment drops isn't broken. They're making a reasonable calculation. Maybe the assignment feels disconnected from anything real. The AI gets it done. They move on to the three other things due this week. Nobody who has been a college student recently finds this hard to understand.

Here's what actually drives students. Not the desire to cheat. Not the desire to drive their professors crazy. What's fueling them is the desire to not waste time on things that feel pointless. Those are completely different problems and they require completely different responses.

The universities treating them as the same problem are making everything worse.

Why Every Current Response Misses the Point

The institutional response to student AI use has been, to be direct about it, embarrassing.

I don't believe AI policies that ban ChatGPT are stupid. The people who wrote them were genuinely trying to preserve something real: the idea that the work should come from the student. Understandable instinct. Wrong execution. A policy banning an LLM is enforced by exactly nothing. Students know this. The policy exists. The tool exists. One of those things is going to win, and it isn't the PDF in your syllabus.

Then there are the cargo-cult solutions. The "AI tutors" that hint at answers without giving them away, designed to keep students engaged without doing the work for them. Good luck. The student opens that tab, sees it isn't going to just answer the question, closes it, and opens ChatGPT. That whole product category exists because someone in a boardroom believed students feel the pain point the same way institutions do. They don't. Students won't accept a worse tool, even if the branding is right.

"They won't. They never will. Banning AI in assessments while leaving assignments completely unchanged solves nothing. It just adds a rule that nobody follows to a system that was already broken."

The Real Insight

Here's the reality. The problem isn't that students use AI to help them. The problem is that assignments were designed to measure effort, and effort can now be outsourced. Those are two completely different failures, and conflating them is why every proposed fix lands wrong.

Of course students are going to use the best available tool. That's not a character flaw. That's what people do. The question a university should be asking isn't how to stop them. It's whether the assessment can still tell you anything meaningful once they do.

Right now the answer is no. You assign a paper. The student uses AI. You receive a paper. Nothing in that sequence tells you whether any learning occurred. Remember the student from the beginning, making a rational calculation about their time? That calculation is rational precisely because the assignment gives them no reason to actually engage with the material. There's no moment where they have to prove they understood it. There's no follow-up. There's no conversation. Just a submission and a grade.

The assessment was supposed to create that moment of proof.

Maybe it once did. It doesn't anymore.

What Actually Needs to Happen

Students aren't the ones who need to change. The assessment is. You have to innovate.

A student who uses AI to draft an answer and then has to defend that answer out loud — explain the reasoning, respond to a follow-up, walk through how they got there — is a student who has to actually know the material. You can't outsource that. There's no tool that does your understanding for you in real time when someone is asking you to explain yourself.

That's what the oral exam always did. It separated the students who understood from the students who could produce. Law schools know this. Medical schools know this. The rest of higher education let it go because asking a hundred students to explain themselves individually takes more time than most professors have in a week.

Prova makes that conversation happen at scale, after every assignment, without consuming the professor's week. Not as a punishment. Not as a gotcha. As the part of the process that should have been there all along.

Students aren't the enemy of learning. A system that asks nothing of them is the enemy of learning.

The student opening ChatGPT isn't the problem you need to solve. The problem is that your assessment has no way of knowing the difference between a student who understands and a student who doesn't. Fix that, and the tool they used to draft the answer stops mattering.