The Problem

There are two students sitting in your class right now. You probably can't tell them apart yet.

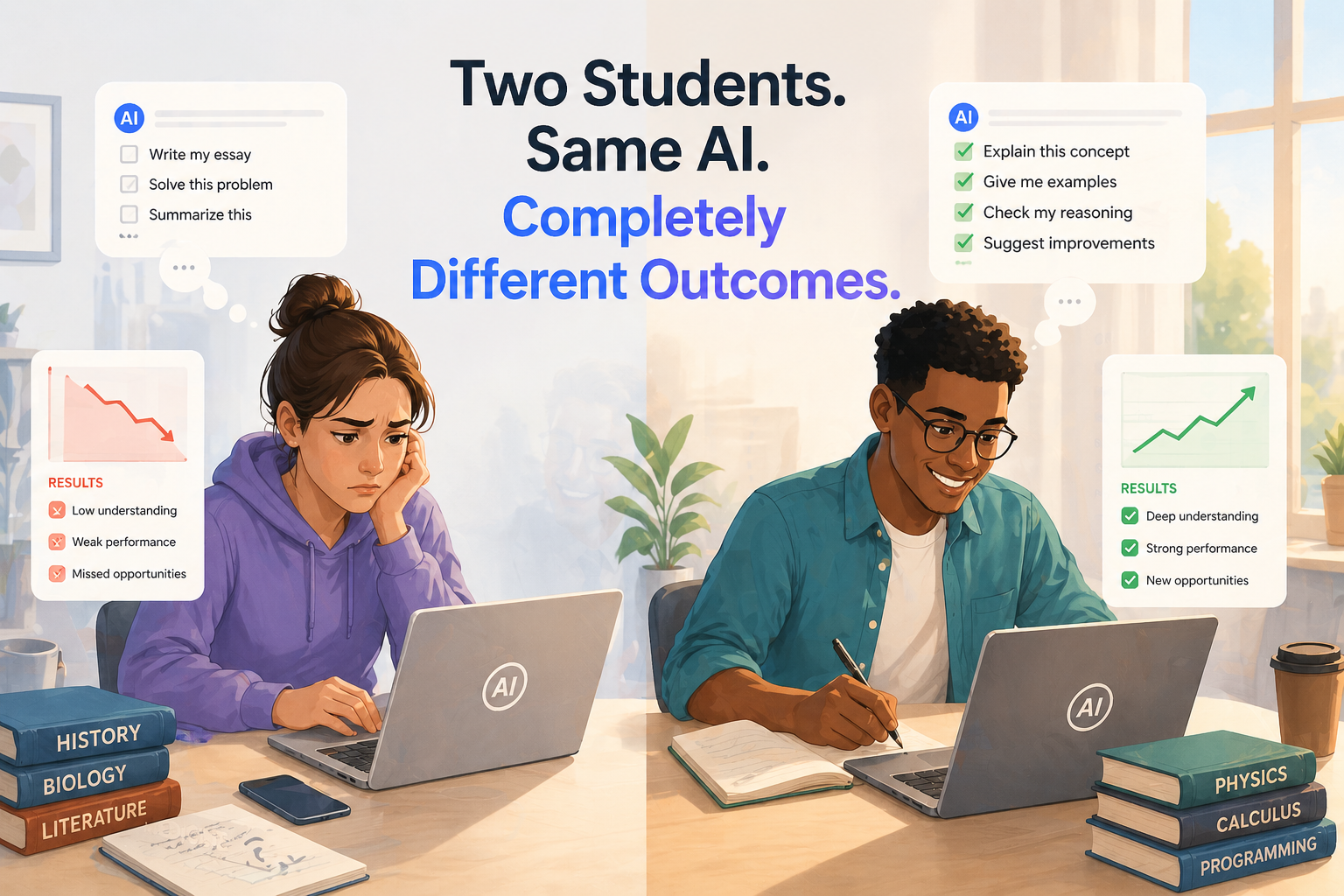

The first one gets an assignment. Understands it. Opens ChatGPT. Chats through what they need to do. Reads the output, thinks about it, pushes back on the parts that seem off, rewrites sections in their own words, and spends the remaining time actually understanding what they submitted. They use AI the way a good researcher uses a library. It's a starting point, a compass, and an advisor. It's not a destination. They're building something real.

The second student gets the same assignment. Opens ChatGPT. Pastes the prompt. Copies the output. Changes a few words. Submits it. Done in eleven minutes.

Both submissions land in your inbox looking roughly the same. Both get grades. Both students move to the next unit. The transcript doesn't distinguish between them. The degree won't either.

Here's where it gets serious. That gap, though invisible right now, compounds every semester. The first student is developing judgment, building the ability to work alongside AI rather than hide behind it, accumulating actual understanding they can draw on when something unexpected happens at work. The second student is accumulating nothing except the habit of avoiding hard thinking.

Then they both graduate from the same institution, with the same degree. And one of them is going to struggle in ways nobody warned them about, because nobody ever asked them to actually explain themselves.

That's not a small problem. That's a failure of the institution's core purpose.

Why the Current System Can't See the Difference

The tragedy is that universities have everything they need to catch this early. They just aren't using it.

Grading rubrics aren't wrong as a concept. The people who designed them wanted consistent, fair evaluation. This is a good instinct. The problem is that a rubric grades the artifact, the finished product, the output. It does not grade the understanding behind it. A well-structured argument gets full marks whether it came from genuine comprehension or a language model that's very good at sounding like genuine comprehension. The rubric itself can't tell. It's not designed to read comprehension; it's designed to read outputs.

Sure, office hours exist. Professors can, in theory, talk to students and find out what they actually know. This may occasionally work in a class of 25. In a class of 60, it's a fantasy. The students who need the most intervention are usually the ones least likely to show up voluntarily. Many are reluctant or intimidated, and only the most courageous will go out of their way to attend.

End-of-semester exams may catch this, sure. But by then the pattern is set, the habits are formed, and the student who spent four months avoiding real thinking is now trying to cram for a test that covers material they never actually processed.

Disturbingly, there's no system right now that looks at a submission and asks the student to account for it. That gap is where the second student lives, semester after semester, completely invisible.

The Real Insight

Now, why does this matter more than another story about academic integrity? Because this isn't an integrity problem. That framing is wrong and it leads to unhelpful solutions.

The second student isn't necessarily a bad person. They're a rational one. The system never required them to understand. It required them to submit. Of course they optimized for submission. That's what the incentives rewarded. If you reward outcomes, the student will find the path to the best possible outcome. Conversely, if you reward processes, students will chase better processes.

The first student learned in spite of the system, not because of it. Their curiosity or their work ethic or their anxiety about the future pushed them to actually engage. But the sad reality is that the institution had almost nothing to do with it. It can't even take credit for that student's learning and success. And, worse, it bears significant responsibility for the student who didn't learn, because it never once asked that student to prove what they knew.

The oral exam always solved this. Ask a student to explain their work and you find out in three minutes which type you're dealing with. Medical schools know this. Law schools know this. The problem was never the method. It was that one professor cannot orally examine more than 40 students after every assignment. That constraint is what let the second student disappear into the system for four years.

That constraint is solvable now.

What the Institution Actually Owes Both Students

The student who is using AI productively deserves a system that recognizes that and rewards it. Not just with a grade on the output, but with the chance to demonstrate the understanding they actually built. Give them the follow-up questions. They'll answer them well and they'll know you know it. That will open more doors for this student than are currently available to them.

The student who isn't proactive in their studies should be caught early. Not punished. Caught. Redirected before the habit calcifies, before the gap between their credential and their actual capability becomes a problem they carry into their career and can't explain. The goal isn't to discipline them, but to help them by understanding their weaknesses. Only by understanding a student's weaknesses can we help them improve.

That's what asking students to explain their work does. Prova puts that question after every assignment, automatically, without consuming the professor's week. Both students get asked. Both students get seen.

The institution's job is to produce graduates who can perform. Right now it's producing transcripts. It's rewarding both of these students and calling it equal. It isn't equal. It was never equal. The problem was that nobody was paying proper attention.