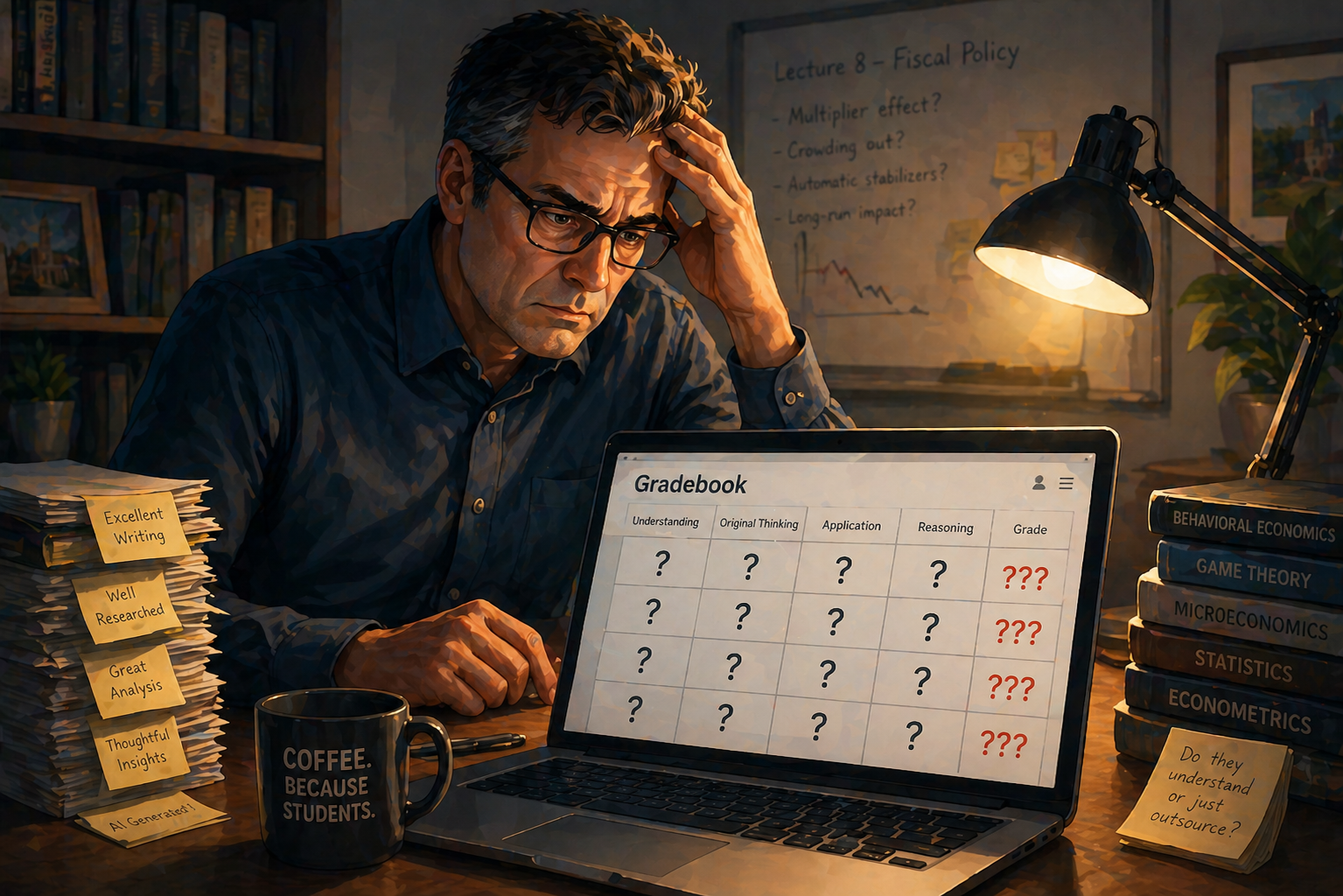

Since nobody is saying this out loud, we will. Professors have stopped trusting their own grades.

Not because they've gotten worse at teaching. Not because students have gotten lazier. Because the things they're grading no longer reliably tell them anything about what's happening inside a student's head. The written outputs: the submitted assignments, the problem sets, the case analyses.

None of it produces a reliable measure of a student's comprehension.

What This Actually Means

Think about it. A professor spends a career developing expertise in their field. They put real care into designing assignments. They read submissions closely. And then they sit with a finished paper in front of them, well written and properly cited, and they genuinely cannot tell whether the person who submitted it understands a single concept in it.

"The problem isn't AI. It's a transparency issue."

This isn't a minor inconvenience. It's a collapse of the feedback loop that teaching runs on. How are you supposed to iterate in the classroom and actually help students when you're flying blind?

What we heard repeatedly: professors are fighting to change their courses not because pedagogy demanded it, but because AI forced their hand. One professor from BYU-Idaho told us he'd started requiring students to submit a verbal explanation of their work alongside every paper. He was doing oral exams. Manually. For 50 students. Every assignment cycle.

He knew it wasn't sustainable. He did it anyway because he didn't have another option.

Another professor, from Baylor University, told us he's adding in-class writing and reverting to pen-and-paper exams. Is AI so powerful that it just punches us back to the '90s? Is this really the best we can do?

Why Everything Being Done About It Is Wrong

The industry's response to this moment has been, to put it charitably, embarrassing.

AI detectors. Let's start there. They barely work today, and six months from now they'll be complete rubbish. Study after study has confirmed it. They flag non-native English speakers as AI writers. They miss actual AI-generated text. They have produced false accusations against real students doing real work. Polished writing gets flagged too: the better you write, the more AI-generated you look. Universities that built their AI integrity policies around these tools have already started quietly walking them back.

Proctoring software is its own disaster. It flags students by default and treats every one of them as a suspect before they've done anything wrong. And it still doesn't tell you whether the person staring at the camera understands the material.

It just tells you they were present.

The "reweighting approach" of just making the final exam worth more is the academic equivalent of rearranging furniture after a flood. The problem isn't the grading weights. It never has been. The problem is that the primary instrument of assessment has been compromised and nobody wants to say it plainly.

The Real Insight

Here's what our 40 conversations with professors made clear. The problem isn't that students are using AI. The problem is that professors can no longer see thinking.

That distinction matters more than it sounds. It's important enough that we could write 50 pieces on it.

If a student uses AI to help structure an argument but genuinely understands the underlying material, that's a different situation from a student who submits work they cannot explain or defend. The first case is actually great.

Except right now it isn't great, because professors can't tell the two cases apart: on the page, human and AI output look identical.

The written assignment was never a perfect proxy for understanding.

It was a convenient one. We built an entire assessment infrastructure on the assumption that if someone could produce the right words in the right order, they probably understood the thing. That assumption held up reasonably well for a long time.

It does not hold up anymore.

The oral exam always knew this. That's why law schools, medical schools, and PhD programs never fully abandoned it. When you ask someone to explain their reasoning out loud, defend a position, or respond to a follow-up they didn't anticipate, you find out very quickly what they actually know. That's before you even count the metacognitive benefits for the student's own learning.

The oral exam wasn't replaced because it was inferior. It was replaced because it didn't scale.

What Actually Needs to Happen

Professors need a way to see thinking again. That's the whole problem stated in one sentence.

It doesn't require surveillance, proctoring, or detection. It doesn't require treating students like criminals; that's the wrong way to look at the problem. Instead, it requires asking students to explain themselves: the same thing good professors have always done, and the same thing that separates real understanding from performed understanding.

What that looks like in practice: a student submits their work, and then they answer for it, the way every professional is eventually asked to answer for their work. A doctor explains their diagnosis. A lawyer defends their argument. An engineer justifies their design choices. And now, a student explains the reasoning behind theirs.

We have somehow constructed a university system where the one group of people never asked to defend their thinking is the students. It used to work, but it does not work today.

Universities owe their students a credential that means something. They owe employers graduates they can trust, graduates who can actually do what their degree says they can do. That promise is at risk in its own right, but that's a conversation for another day.

This doesn't require burning the curriculum down. It requires some thinking. A good start is adding back the one thing that should never have been removed.

The oral exam. At scale. For everyone.